Faces, credit cards, birth certificates - How private data could end up in AI training sets (and no one stopped it)

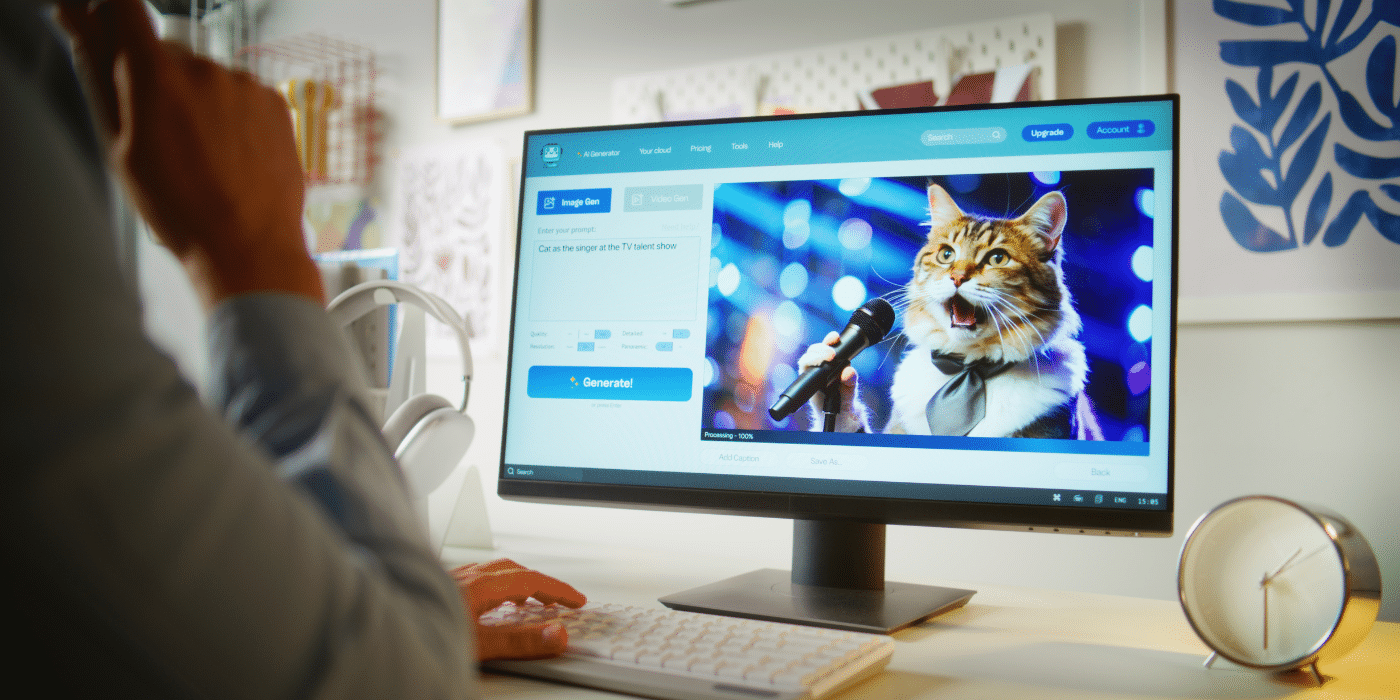

AI eats everything - including your personal data

What happens to the data we unsuspectingly upload to the internet? Researchers have now shown that the answer is more alarming than many thought: it ends up in the training data for artificial intelligence - without consent, without control and often without protection. According to a new study, DataComp CommonPool, a gigantic collection of over 12 billion image-text pairs for training AI models, contains a massive amount of private and sensitive information.

This includes faces, health data, pictures of credit cards, job application documents - even children's photos and birth certificates. The researchers found this content after examining just 0.1% of the data set. Extrapolated to the full data set, this amounts to hundreds of thousands to millions of identifiable cases that have long since found their way into AI systems such as image generators. And worst of all, the damage is already done. Can it be undone? Almost impossible.
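To make the extrapolation concrete, here is a minimal sketch of the arithmetic. The count of 1,000 hits is a purely hypothetical figure chosen for illustration, not a number from the study, and the function name `extrapolate` is our own. It also assumes that sensitive content is spread evenly across the data set, which is a simplification.

```python
def extrapolate(sample_hits: int, sample_fraction: float) -> int:
    """Scale a count found in a sample up to the full data set.

    Assumes the sample is representative of the whole.
    """
    if not 0 < sample_fraction <= 1:
        raise ValueError("sample_fraction must be in (0, 1]")
    return round(sample_hits / sample_fraction)


# Hypothetical figure: 1,000 sensitive items found in a 0.1% sample.
estimate = extrapolate(sample_hits=1_000, sample_fraction=0.001)
print(f"Estimated items in the full data set: {estimate:,}")
```

Because the sample is only one thousandth of the data set, every single find stands for roughly a thousand cases in the full collection.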

Publicly accessible is not synonymous with permitted

The data in CommonPool comes from freely accessible websites that were harvested by automated web scrapers - some as far back as 2014. The creators of the dataset say it is intended for research only, but the license does not prohibit commercial use. This opens the door for companies to feed their AI with this data - possibly including your personal data.

Many people posted their content online in good faith, for example on job application portals or family blogs. They did not expect that an AI model would later analyze their documents, recognize faces or link places of residence with health data. And this is precisely the core problem: the internet is not a self-service store for AI.

Filters that don't work - and a law that lags behind

The operators of CommonPool say they have built in protective measures, such as automatic face detection and blurring. In reality, the algorithms do not make every face unrecognizable. Emails, ID numbers, addresses? Hardly filtered either. Why? Because it is technically difficult - according to the researchers themselves. But is that an excuse?
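Why is filtering so difficult? The following minimal sketch illustrates the problem using email addresses in image captions. It is not the filter used for CommonPool; the pattern and the function `redact_emails` are our own simplified assumptions.

```python
import re

# Deliberately simple pattern (an assumption for illustration);
# real-world email addresses are more varied than this.
EMAIL_PATTERN = re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}")


def redact_emails(caption: str) -> str:
    """Replace anything that looks like an email address with a placeholder."""
    return EMAIL_PATTERN.sub("[EMAIL]", caption)


# A plainly written address is caught ...
print(redact_emails("Contact: jane.doe@example.com"))

# ... but a lightly obfuscated one passes through unchanged.
print(redact_emails("Contact: jane.doe (at) example (dot) com"))
```

A text filter of this kind also only sees the caption. An address printed inside the image itself, for example on a scanned application form, is never reached by it at all.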

Even if data subjects knew that their data was being used - which is unlikely - and demanded its deletion, the trained AI model would remain in place. It has learned from the data, and this knowledge cannot simply be "deleted". The legislators? Slow to react. In Europe there is the GDPR, in the USA there are state-level data protection laws - but many data set creators fall through the cracks because they are small or research-oriented.

What does "public" mean? A misconception with consequences

A key misconception in the AI community is that whatever is publicly visible on the internet is free to use. This is a dangerous fallacy. As the study shows, "publicly accessible" often includes extremely private content that was never intended for such purposes - from birth certificates to private family pages. The researchers are now calling for a rethink in the industry. And urgently.

Negligent - and extremely dangerous

The AI world often thinks: "What's online belongs to everyone." Wrong! If my face or credit card number appears in an AI model, it's not a technical glitch - it's a clear violation of my rights.

The fact that huge data sets full of personal information are circulating online while legislators and supervisory authorities are fast asleep is a digital scandal. And the "filtering is difficult" argument? It sounds like: "I drive too fast because my car has no brakes."

We say: such data sets should be taken off the internet immediately. Anyone who uses private data without asking should not be conducting research - they should be held accountable. Period.

Want to make sure your personal data is protected? Book a consultation with our data protection law experts now!